Justin Kang

I am a PhD candidate at UC Berkeley (EECS), affiliated with BAIR and advised by Prof. Kannan Ramchandran. My research develops efficient algorithms for ML interpretability and attribution — explaining which input features, training data, and interactions drive model predictions in LLMs and other large-scale models. I am an NSERC Doctoral Fellow and Berkeley Graduate Fellow, and have interned with Google Research, Bosch AI Research, and Intel.

News

- Upcoming invited talk at the Canadian Information Theory Workshop (CITW) 2026 (June 2026).

- Invited talk at Turing.com (May 2026).

- Papers A Positive Case for Faithfulness and An Odd Estimator for Shapley Values accepted to ICML 2026.

- A Positive Case for Faithfulness presented at the ICLR 2026 Trustworthy AI conference.

- Featured in Together AI’s blog post on EinsteinArena — reclaimed #1 on the second autocorrelation inequality leaderboard.

- New blog post: Human-AI Collaboration on Open Math Problems — how I used AI agents to achieve #1 on the EinsteinArena leaderboard.

Older Announcements

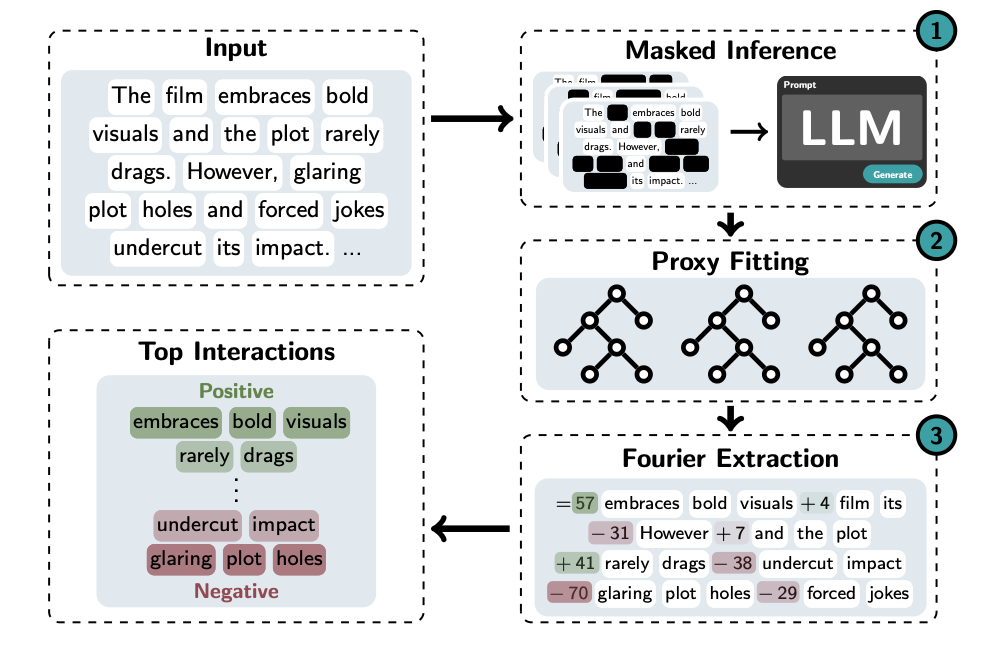

- BAIR blog post: Identifying Interactions at Scale for LLMs — an overview of our SPEX line of work on scalable feature interaction explanations.

- Talk at ITA 2026 graduation day — won the sea award. (slides)

- I was selected as a 2026 Heron AI Security Fellow.

- Papers ProxySPEX (Spotlight) and SHAP-Zero (Poster) accepted to NeurIPS 2025.

- Prof. Bin Yu presented our work at the Flatiron Institute: Understanding Deep Learning Models via Interaction Importance.

- SPEX: Scaling Feature Interaction Explanations for LLMs accepted to ICML 2025.

- Presented research on interpreting LLMs at DEVCOM Army Research Lab.

- Joining Bosch AI Research for summer 2025, working on autolabeling and data filtering with Suraj Srinivas, Jorge Piazentin Ono, and Jared Evans.

- Learning to Understand: Identifying Interactions via the Mobius Transform accepted to NeurIPS 2024.

- Interviewed for a Built In article on Federated Learning.

- My paper won the 2024 IEEE Communication Society and Information Theory Society Joint Paper Award.

- Joined Google for summer 2024 as a Student Researcher in the Cloud Platforms Systems Research Group (SRG).

Research Highlights

- Interpretability & Data Attribution: I build scalable tools (SPEX, ProxySPEX) that identify important feature interactions and perform data attribution in LLMs, achieving up to 20% better faithfulness than prior methods like SHAP and scaling to 1000+ input features. Check out the shapiq library to try it out!

- Signal Processing → ML: I bring a strong signal processing and information theory perspective to ML problems, which leads to unique algorithmic solutions — including sparse Möbius/Fourier transforms for efficient model explanation.

- Faithfulness of Explanations: I recently led work on evaluating whether LLM self-explanations are faithful to actual model behavior, in collaboration with Noah Siegel from Google DeepMind.

- Award-Winning Research: My work on scheduling in massive random access networks won the 2024 IEEE ComSoc & IT Society Joint Paper Award.

Selected Papers

(* denotes equal contribution)

A Positive Case for Faithfulness: LLM Self-Explanations Help Predict Model Behavior.

Mayne, H.*, Kang, J.S.*, Gould, D., Ramchandran, K., Mahdi, A., Siegel, N.Y.

ICML 2026, ICLR 2026 Trustworthy AI (paper)

An Odd Estimator for Shapley Values.

Fumagalli, F., Butler, L., Kang, J.S., Ramchandran, K., Witter, R.T.

ICML 2026 (paper)

ProxySPEX: Inference-Efficient Interpretability via Sparse Feature Interactions in LLMs.

Butler, L.*, Agarwal, A.*, Kang, J.S.*, Erginbas, Y.E., Ramchandran, K., Yu, B.

NeurIPS 2025 Spotlight (paper, poster, video)

SHAP-Zero explains biological sequence models with near-zero marginal cost.

Tsui, D., Musharaf, A., Erginbas, Y.E., Kang, J.S., Aghazadeh, A.

NeurIPS 2025 (paper, poster, video)

SPEX: Scaling Feature Interaction Explanations for LLMs.

Kang, J.S.*, Butler, L.*, Agarwal, A.*, Erginbas, Y.E., Pedarsani, R., Ramchandran, K., Yu, B.

ICML 2025 (paper, poster, video)

Learning to Understand: Identifying Interactions via the Mobius Transform.

Kang, J.S., Erginbas, Y.E., Butler, L., Pedarsani, R., Ramchandran, K.

NeurIPS 2024 (paper, poster, video)

The Fair Value of Data Under Heterogeneous Privacy Constraints in Federated Learning.

Kang, J.S., Pedarsani, R., Ramchandran, K.

TMLR 2024, NeurIPS FL@FM 2023 Workshop Oral (paper, poster, video)

Industry Experience

- Google — Student Researcher, Cloud Platforms Systems Research Group (Summer 2024)

- Bosch AI Research — Research Intern, working on autolabeling and data filtering (Summer 2025)

- Intel — Non-Volatile Memory Solutions Group, Storage Systems Research Intern (previously)

Education

- PhD in EECS, UC Berkeley (in progress)

- M.A.Sc. in ECE, University of Toronto — advised by Prof. Wei Yu

- B.A.Sc. in Engineering Physics, University of British Columbia